About eleven years ago, in January of 2008, the New England Journal of Medicine published a perspective piece on direct to consumer genetic tests, “Letting the Genome out of the Bottle, Will We Get Our Wish.” The article begins by describing an “overweight” patient who “does not exercise.” This man’s children have given him the gift of a direct to consumer genetics service at the bargain price of $1,000.

The obese person who (did we mention) can’t be troubled to go to the gym is interested in medical advice based on the fact that they have SNPs associated with both diabetes and cardiovascular disease. The message is implied in the first paragraph, and explicitly stated in the last: “Until the genome can be put to useful work, the children of the man described above would have been better off spending their money on a gym membership or a personal trainer so that their father could follow a diet and exercise regimen that we know will decrease his risk of heart disease and diabetes.”

Get it? Don’t bother us with data. We knew the answer as soon as your heavy footfalls sounded in the hallway. Hit the gym.

The authors give specific advice to their colleagues “for the patient who appears with a genome map and printouts of risk estimates in hand.” They suggest dismissing them: “A general statement about the poor sensitivity and positive predictive value of such results is appropriate … For the patient asking whether these services provide information that is useful for disease avoidance, the prudent answer is ‘Not now — ask again in a few years.'”

Nowhere do the authors mention any potential benefit to taking a glance at the sheaf of papers this man is clutching in his hands.

Just 10 years ago, a respected and influential medical journal told primary care physicians to discourage patients from seeking out information about their genetic predisposition to disease. Should someone have the nerve to bring a “printout,” they advise their peers to employ fear, uncertainty, and doubt. They suggest using some low-level statistical jargon to baffle and deflect, before giving answers based on a population-normal assumption.

I’m writing this post because I went to the doctor last week and got that exact answer, almost verbatim. I already went off about this on Twitter, but I think the topic may benefit from a more nuanced take.

More on that at the end of the post.

Eight bits of history

For all its flaws, the article does serve as a fun and accessible reminder of how far we have come in a decade.

I did 23andme when it first came out. I’ve downloaded my data from them a bunch of times. Here are the files that I’ve downloaded over the years, along with the number of lines in each file:

cdwan$ wc -l genome_Christopher_Dwan_*

| Lines | File |
| --- | --- |
| 576119 | genome_Christopher_Dwan_20080407151835.txt |
| 596546 | genome_Christopher_Dwan_20090120071842.txt |
| 596550 | genome_Christopher_Dwan_20090420074536.txt |
| 1003788 | genome_Christopher_Dwan_Full_20110316184634.txt |
| 1001272 | genome_Christopher_Dwan_Full_20120305201917.txt |

The 2008 file contains about 576,000 data points. That nearly doubled, to a bit over a million, when they updated their SNP chip technology in 2011.

The authors were concerned that “even very small error rates per SNP, magnified across the genome, can result in hundreds of misclassified variants for any individual patient.” When I noticed that my results from the 2009 download were different from those in 2008, I wrote a horrible PERL script to figure out the extent of the changes. I still had compare.pl sitting around on my laptop, so I ran it again today. I was somewhat shocked that it worked on the first try, a decade and at least two laptops later!

My 23andme results were pretty consistent. Of the SNPs that were reported in both v1 and v2, my measurements differ at a total of 54 loci. That’s an error rate of about one hundredth of one percent. Not bad at all, though certainly not zero.
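For anyone who wants to repeat this kind of check, here is a minimal sketch in Python. It is not the original compare.pl, which isn't shown here. It assumes the usual 23andMe raw-data layout (tab-separated rsid, chromosome, position, genotype, with `#` comment lines), and the function names `load_genotypes` and `compare_calls` are made up for illustration. A no-call in one file and a real call in the other counts as a difference in this sketch, which may or may not be what you want.

```python
import io


def load_genotypes(handle):
    """Map rsid -> genotype, skipping blank lines and '#' comment lines."""
    calls = {}
    for line in handle:
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        # Assumed column order: rsid, chromosome, position, genotype
        rsid, _chrom, _pos, genotype = line.split("\t")[:4]
        calls[rsid] = genotype
    return calls


def compare_calls(old, new):
    """Return (number of shared rsids, sorted list of rsids whose calls differ)."""
    shared = old.keys() & new.keys()
    # Sort the letters so that "AG" and "GA" count as the same call
    differing = sorted(r for r in shared if sorted(old[r]) != sorted(new[r]))
    return len(shared), differing


# Tiny in-memory stand-ins for two downloads (toy data, not real results)
v1 = io.StringIO(
    "# rsid\tchromosome\tposition\tgenotype\n"
    "rs1\t1\t100\tAG\n"
    "rs2\t1\t200\tCC\n"
    "rs3\t2\t300\tTT\n"
)
v2 = io.StringIO(
    "rs1\t1\t100\tGA\n"
    "rs2\t1\t200\tCT\n"
    "rs4\t3\t400\tGG\n"
)

n_shared, differing = compare_calls(load_genotypes(v1), load_genotypes(v2))
print(n_shared, differing)

# The arithmetic from the post: 54 differing loci. The 2008 line count is used
# here as a rough stand-in for the number of shared SNPs (an assumption).
rate_percent = 54 / 576_119 * 100
print(f"{rate_percent:.4f}%")
```

With real files, you would pass open file handles instead of the `io.StringIO` objects. Dividing by the 2008 line count slightly overstates the denominator, since the file includes header lines, but it is close enough to confirm the "one hundredth of one percent" figure.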

For comparison, consider the height and weight that usually get taken when you visit a doctor’s office. In my case, they do these measurements with shoes and clothing on – meaning that I’m an inch taller (winter boots) and about 8 pounds heavier (sweater and coat) if I see my doctor in the winter. Those are variations of between 1% and 5%.

Fortunately, nobody ever looks at adult height or weight as measured at the doctor’s office. They put us on the scale so that the practice can charge our insurance providers for a physical exam, and then the doctor eyeballs us for weight and any concealed printouts.

A data deluge

Back to genomics: $1,000 will buy a truly remarkable amount of data in late 2018. I just ordered a service from Dante Labs that offers 30x “read depth” on my entire genome. They commit to measure each of my 3 billion letters of DNA at least 30 times. Taken together, that’s 90 billion data points, or 180,000 times more measurements than that SNP chip from a decade ago. Of course, there’s a strong case to be made that those 30 reads of the same location are experimental replicates, so it’s really only 3 billion data points or 6,000 times more data. Depending on how you choose to count, that’s either 12 or 17 doublings over a ten year span.

Either way, we’re in a world where data production doubles faster than once per year.

This is a rough and ready illustration of the source of the fuss about genomic data. Computing technology, both CPU and storage, seems to double in capacity per dollar every 18 months. Any industry that exceeds that tempo for a decade or so is going to experience growing pains.
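The doubling arithmetic is easy to check. The sketch below assumes a round baseline of 500,000 SNPs for the old chip, which is what the 180,000x and 6,000x figures imply (the actual 2008 file has 576,119 lines), and it takes the 18-month figure for computing at face value. The variable names are just for illustration.

```python
import math

chip_snps = 500_000           # assumed round baseline for the 2008 SNP chip
genome_bases = 3_000_000_000  # roughly 3 billion letters of DNA
read_depth = 30               # 30x coverage
years = 10

# Two ways to count: every read is a data point, or replicates collapse to one
ratio_counting_reads = genome_bases * read_depth / chip_snps
ratio_counting_bases = genome_bases / chip_snps

for label, ratio in [
    ("every read counts", ratio_counting_reads),
    ("replicates collapsed", ratio_counting_bases),
]:
    doublings = math.log2(ratio)
    months_per_doubling = years * 12 / doublings
    print(f"{label}: {ratio:,.0f}x, {doublings:.1f} doublings, "
          f"one every {months_per_doubling:.1f} months")

# Computing capacity doubling every 18 months over the same decade
compute_doublings = years * 12 / 18
print(f"computing: {compute_doublings:.1f} doublings")
```

Either way of counting gives a doubling time of well under a year, compared with fewer than seven doublings for computing over the same ten years.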

To make the math simple, I omitted the fact that this year’s offering -also- gives me an additional 100x of read depth within the protein coding “exome” regions, as well as some even deeper reading of my mitochondrial DNA.

One real world impact of this is that I’m not going to carry around those raw reads on my laptop anymore. The raw files will take up a little more than 100 gigabytes, which would be 20% of my laptop hard disk (or around 150 CD ROMs).

I plan to use the cloud, and perhaps something more elegant than a 10 year old single threaded PERL script, to chew on my new data.

The more things change

Back to the point: I’m writing this post because, here in late 2018, I got the -exact- treatment that the 2008 article recommends. It’s worse than that, because I didn’t even bring in anything as fuzzy as genotypes or risk variants. Instead, I brought lab results, ordered through Arivale, and generated by a Labcorp facility to HIPAA standards.

I’ve written about Arivale before. They do a lab workup every six months. That, coupled with data from my wearable and other connected devices, provides the basis for ongoing coaching and advice.

My first blood draw from Arivale showed high levels of mercury. I adjusted my diet to eat a bit lower on the food chain. When we measured again six months later, my mercury levels had dropped by 50%. However, other measurements related to inflammation had doubled over the same time period. Everything was still in the “normal” range – but a fluctuation of a factor of two struck me as worth investigating.

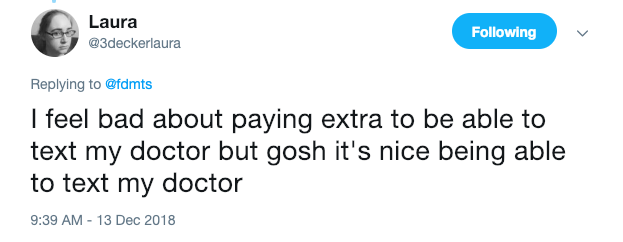

I use one of those fancy medical services where, for an -additional- out-of-pocket annual cost, I can use a web or mobile app to schedule appointments, renew prescriptions, and even exchange secure messages with my care team. Therefore, I didn’t have to do anything as undignified as bringing a sheaf of printouts to my physician’s upscale office on a high floor of a downtown Boston office building. Instead, I downloaded the PDFs from Arivale and sent them as a message with my appointment request.

When we met, my physician had printed out the PDFs. Perhaps this is part of that “digital transformation” I’ve heard so much about. The 2008 article is studiously silent on the topic of doctors bearing printouts. I’m guessing it’s okay if they do it.

Anyway, I had the same question as the obese, exercise-averse patient who drew such scorn in the 2008 article: Is there any medical direction to be had from this data?

My physician’s answer was to tell me that these direct to consumer services are “really dangerous.” He gave me the standard line about how all medical procedures, even minimally invasive ones, have associated risks. We should always justify gathering data in terms of those risks, at a population level. He cautioned me that going down the road of even looking at elevated inflammation markers can lead to uncomfortable, unnecessary, and ultimately dangerous procedures.

Thankfully, he didn’t call me fat or tell me to go get a gym membership.

This, in a nutshell, is our reactive system of imprecision medicine.

This is also an example of our incredibly risk averse business of medicine, where sensible companies will segment and even destroy data to avoid the danger of accidentally discovering facts that they might be obligated to report or to act on.

This, besides the obvious profit motive, is why consumer electronics and retail outfits like Apple and Amazon are “muscling into healthcare.”

The void does desperately need to be filled, but I think it’s pretty terrible that the companies best poised to exploit the gap are the ones most ruthlessly focused on the bottom line, most extractive in their runaway capitalism, and who have histories of terrible practices around both labor and privacy.

A happy ending, perhaps

I really do believe that there is an opportunity here: A chance to radically reshape the practice of medicine. I’m a genomics fanboy and a true believer in the power of data.

To be clear, the cure is not any magical app. The transformation will not be driven simply by encoding our data as XML, JSON, or some other format entirely. No specific variant of machine learning or artificial intelligence is going to un-stick this situation.

It’s not even blockchain.

The answer lies in a balanced approach, with physicians being willing to use data driven technologies to amplify their own senses, to focus their attention, to rapidly update their recommendations and practices, and to measure and adjust follow ups and follow throughs.

To bring it back to our obese patient above, consider the recent work on polygenic risk scores, particularly as they relate to cardiovascular health. A savvy and up-to-date physician would be well advised to look at the genetics of their patients – particularly those of us who don’t present as a perfect caricature of traditional risk-factors for heart disease.

I’ve written in the past about another physician who sized me up by eyeball and tried to reject my request for colorectal cancer screening, despite a family history, genetic predisposition, and other indications. “You look good,” he said, “are you a runner?”

There is a saying that I keep hearing: “Artificial Intelligence will not replace physicians. However, physicians who use Artificial Intelligence will replace the ones who do not.”

The same is true for using all the data available. In my opinion, it is well past time to make that change.

I would love to hear what you folks think.

Thanks for the great post Chris.

Admittedly oversimplified “solution” – part shared values (please see http://dibiase1.com/2019/01/11/valuing-our-time-together/), part strict discipline, part analysis of heredity & accumulated mutations (across multi-omics), part managing social connections, part re-investing wealth …

TODO finish my response (over beers ;^)